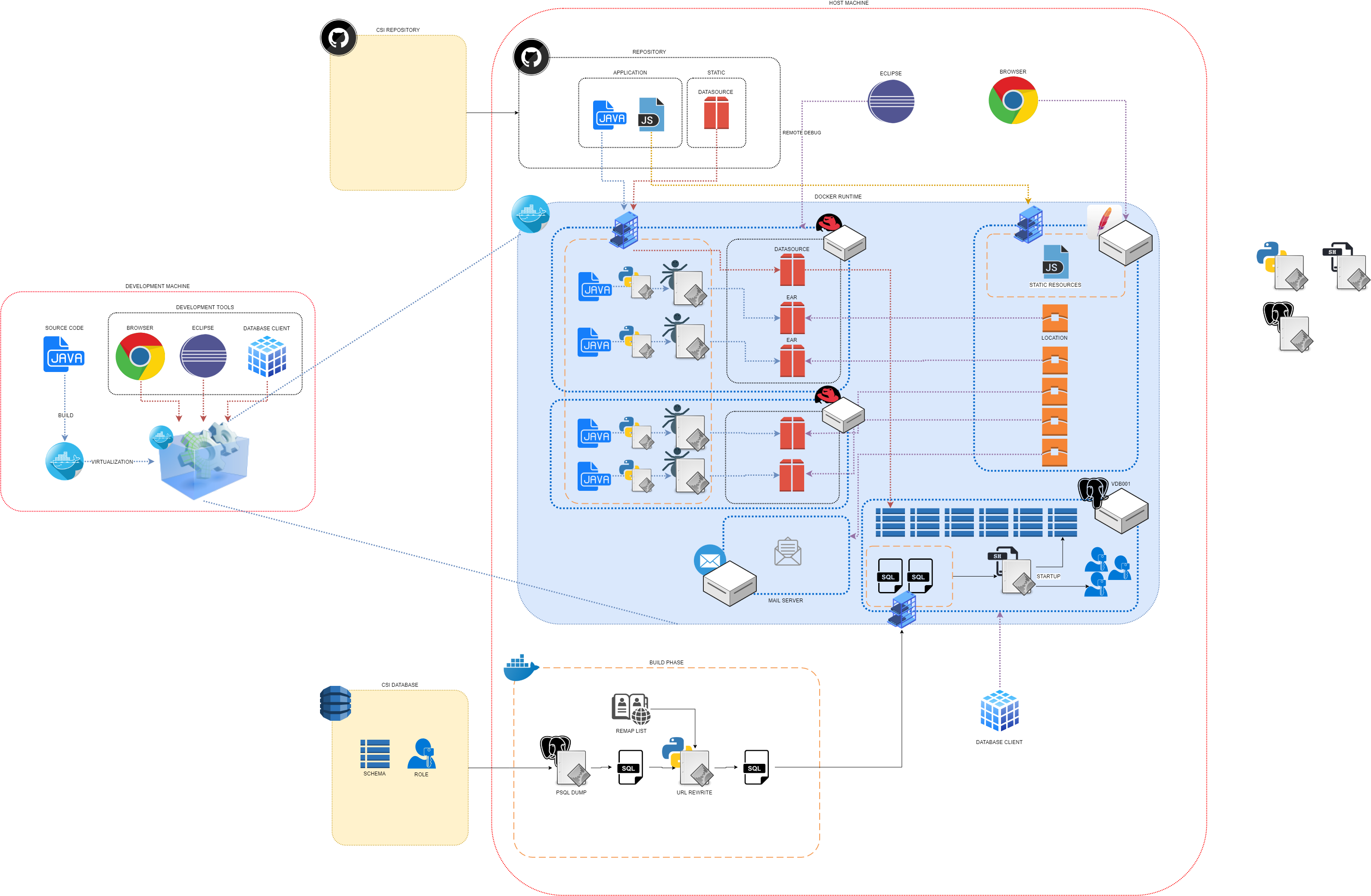

Debugging environments is hard. Development on shared environments is cumbersome. Automated testing on physical infrastructure is terribly unreliable.

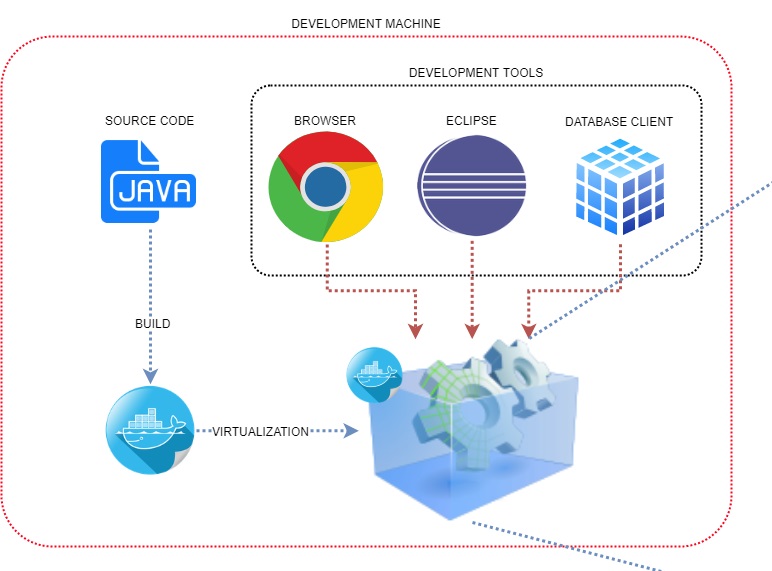

Virtualization technologies are extremely helpful here. They tackle all of the above problems by giving developers and testers an on-demand local environment that is an exact copy of the production environment but self-contained on the local machine: no development clashes, no data loss, and repeatable automated test flows all come for free.

What is this?

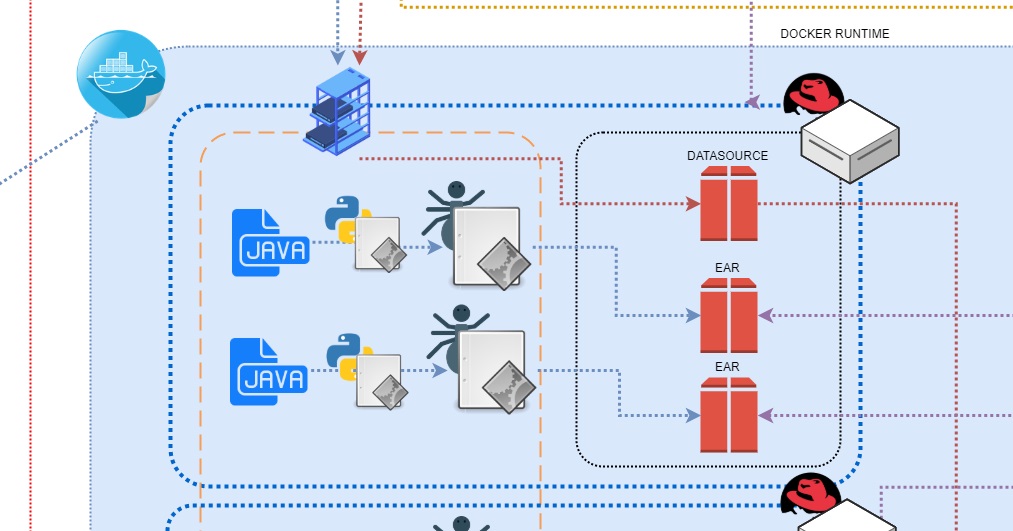

Docker (with docker-compose) is a container-based virtualization engine that can create, manage, destroy, and restore entire systems of virtual machines (containers, strictly speaking) configured to emulate a very complex environment.

Why did I do this?

Part of my job consists of building tools and planning flows to empower developers and testers with the best instruments in the best context.

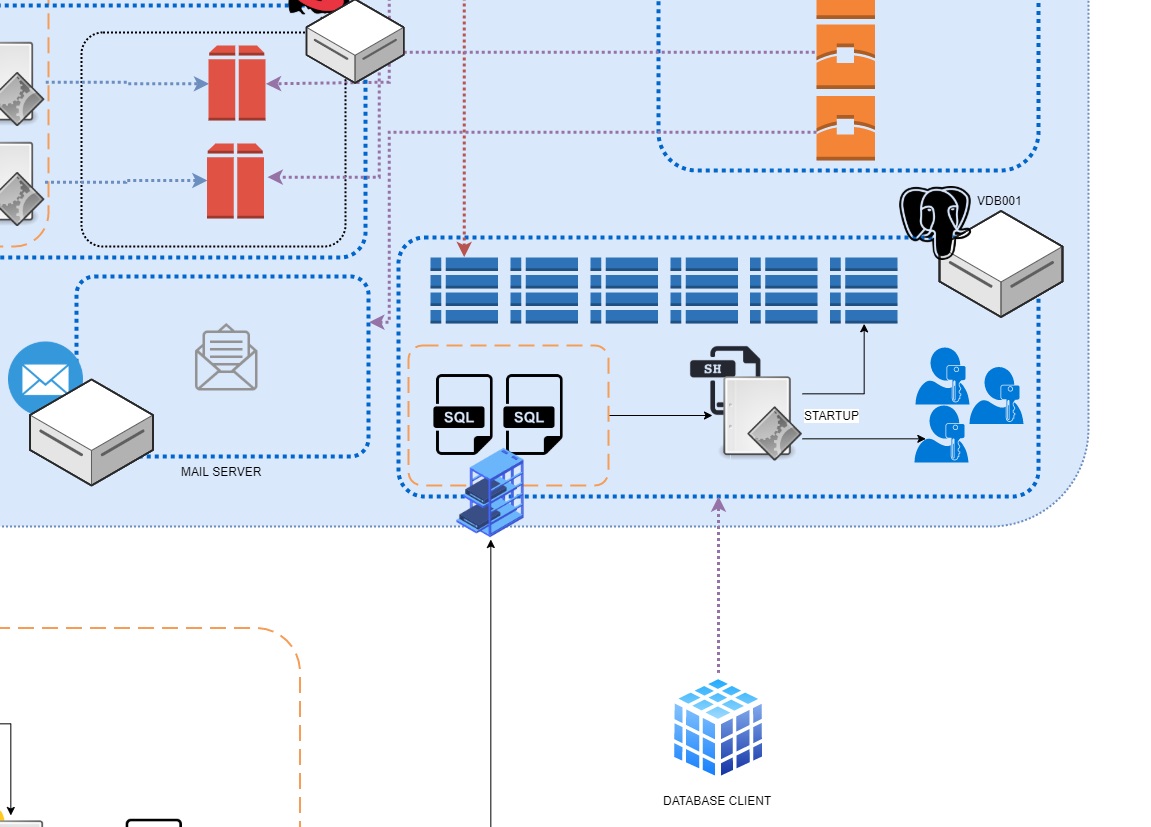

In my latest position we worked on a very complex environment: 12+ databases, 24 separate applications, various dispatching queues, different and obsolete application servers, schedulers, jobs, and scripts.

Working on the client’s test environments was very difficult because:

- they were poorly maintained and of limited availability

- releases were difficult

- different developers would clash when updating the same database with different in-development software versions

- no automated testing could be performed because of forced data persistence

- partial application releases could not be performed without breaking everything for everyone

Development was very hard and often resulted in unjustifiably poor performance on our side.

So I decided to create an entire virtualized copy of our client’s environment.

How did I do this?

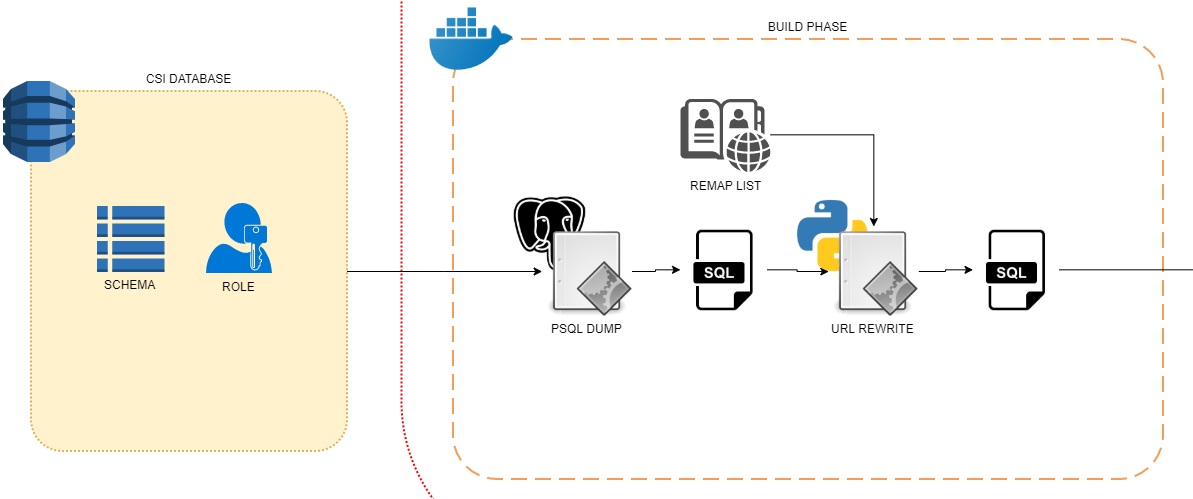

I solved these problems by creating an entire virtualized copy of our client’s environment, replicating on demand every single database, application server, dispatching queue, scheduled job, Apache server, … on developers’ and testers’ local machines.

This required:

- Scouting of our client’s environment

- Reverse engineering of nodes, hardware configurations, queues, scheduling, internal network connections, frontend balancers and locations, and so on

- Reengineering of involved applications to allow automated building

- Composition of virtual machines and networks using docker-compose

- Automation of remote database replication

- Translation of remote networking to virtualized networking
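To illustrate the on-demand lifecycle that such a composition makes possible, here is a minimal sketch in Python. It is not the tooling built for this project: it assumes the Docker Compose v2 CLI (`docker compose`), and both the file name `docker-compose.yml` and the function names are hypothetical.

```python
import subprocess
from typing import List

# Hypothetical compose file describing the whole virtual environment.
COMPOSE_FILE = "docker-compose.yml"


def up_command(compose_file: str = COMPOSE_FILE) -> List[str]:
    """Build the command that starts every service in the background."""
    return ["docker", "compose", "-f", compose_file, "up", "-d"]


def down_command(compose_file: str = COMPOSE_FILE) -> List[str]:
    """Build the command that stops the services and removes their volumes."""
    return ["docker", "compose", "-f", compose_file, "down", "--volumes"]


def recreate_environment(compose_file: str = COMPOSE_FILE) -> None:
    """Throw away the current environment and start a fresh one.

    Removing the volumes is what discards database changes made since the
    last start, assuming the databases keep their data in compose volumes.
    """
    subprocess.run(down_command(compose_file), check=True)
    subprocess.run(up_command(compose_file), check=True)
```

Calling `recreate_environment()` before a test run would give every run the same starting state, provided the images themselves carry the replicated data.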

Trust me, it’s been a lot of work.

How would this be instrumental in a business environment?

By doing this, I solved a very complex and widespread problem and gave my team on-demand local environments with a repeatable, snapshotted state:

- no more clashing with other developers: everyone has their own system with their own databases

- everyone can test complex bugfixes and improvements on the whole environment before releasing a single file, thus leaving the test and production environments functional and available

- automated testing is now possible: stop and restart the virtual environment and everything resets, including the databases

- efficient development workflow: the whole environment is on your machine

- automatic build of every application and every configuration: developers no longer need to rebuild and deploy packages to see their changes. The virtualization engine I provided includes a watch engine that detects a file change as soon as a developer edits a single line of code, then automatically repackages and redeploys the application in the virtual environment in the background. Developers only have to touch the code, and the environment literally deploys itself

- want to preview how a different branch of your application will integrate with the production system? Check out the dedicated branch of the application and it will automatically deploy itself in the virtual environment
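The watch-and-redeploy idea from the list above can be sketched as a simple polling loop. This is only an illustration in Python under stated assumptions, not the watch engine actually shipped: it compares file modification times between two scans, and the `on_change` callback (where repackaging and redeploying would happen) is a hypothetical placeholder.

```python
import os
import time
from typing import Callable, Dict, List


def snapshot(root: str) -> Dict[str, float]:
    """Map every file under root to its last-modification time."""
    mtimes: Dict[str, float] = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                mtimes[path] = os.stat(path).st_mtime
            except OSError:
                pass  # the file vanished between listing and stat
    return mtimes


def changed_files(before: Dict[str, float], after: Dict[str, float]) -> List[str]:
    """Return paths added, removed, or modified between two snapshots."""
    paths = set(before) | set(after)
    return sorted(p for p in paths if before.get(p) != after.get(p))


def watch(root: str, on_change: Callable[[List[str]], None],
          interval: float = 1.0) -> None:
    """Poll root forever and call on_change with the changed paths.

    on_change is a hypothetical hook: a real setup would repackage and
    redeploy the affected application there.
    """
    previous = snapshot(root)
    while True:
        time.sleep(interval)
        current = snapshot(root)
        changed = changed_files(previous, current)
        if changed:
            on_change(changed)
        previous = current
```

Polling keeps the sketch dependency-free; a production watcher would more likely rely on operating-system file notifications to react faster and scan less.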

Sleep through the night again!

Can I test this?

We can talk about this by email.